Data Validation Best Practice Guide

Quality data is critical for identifying performance gaps, key industry trends, and revenue opportunities. Since the data wave, businesses have shifted toward unstructured and semi-structured data, increasing the need for BI processing and validation. Validating your data provides the following benefits:

- Access to reliable data on-demand

- Minimized effort, cost, and errors

- Increased competitive edge with more accurate insights

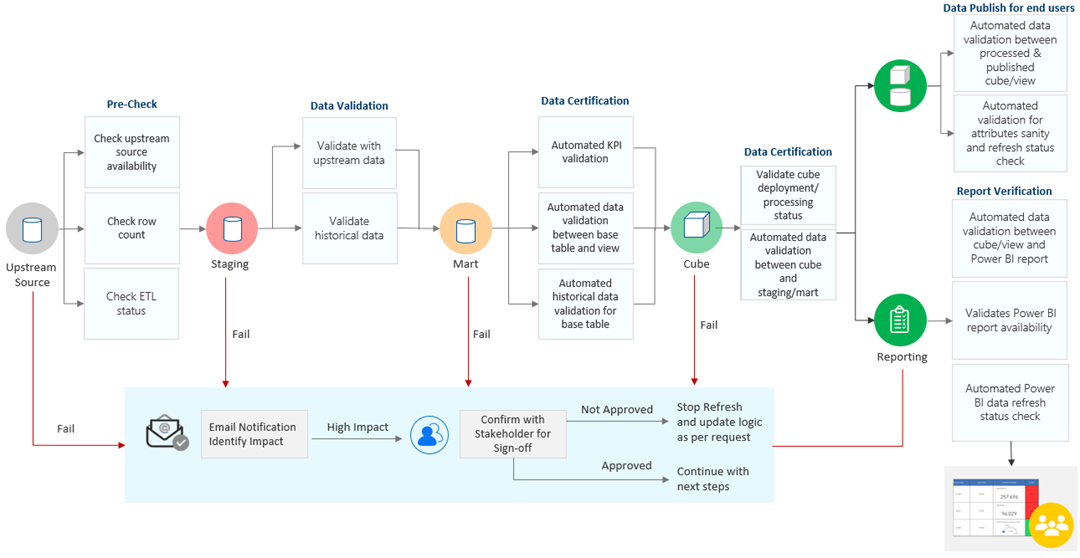

At MAQ Software, we’re committed to sharing our expertise with growing businesses. Our exclusive data validation framework can be implemented in any BI project with 100% accuracy for descriptive, prescriptive, and other insights.

- Inventory upstream data sources

- Perform data staging

- Push to data mart

- Validate data staging and mart

- Publish data for end users

- Track tabular or multidimensional model performance

- Verify BI reporting

Ensure upstream data is accessible. Record the number of data records to identify if data is lost or duplicated during the next steps.

Identify data with a unique combination of attributes. Perform a trend analysis of key attributes. Examples: the rate of attribute changes or the rate of data changes period over period.

If using Azure Databricks (ADB), process notebooks in parallel (versus sequentially) to reduce refresh time. Identify platform run failures. If using tabular models, cross-check the data consistency between data mart and tabular models. For non-cloud environment where SSIS or other ETL tools might be used, implement similar checks for staging against data mart publish. Example: data profiling in SSI

Perform build verification testing (BVT), table-level BVT, and BVT for multiple sources.

Validate the data to ensure data was not lost or duplicated while being pulled from databases. Example: Azure Data Warehouse (ADW) dump failure tracking. Check for data loss when changing the schema from temporary format to end user agreed schema format. Track downstream user data usage and remove unused tables/views to improve report performance.

Track measure execution time to detect time lags. Remove unused reporting measures. Check all tabular/multidimensional columns to prevent failure while processing data. Compare previous tabular/multidimensional refreshes with the current refresh for sudden drop/increase in the number of records processed beyond a predefined threshold limit.

Import vs. tabular model validator: compare consistency of datapoints rendered in BI report through import model and tabular. Track BI report usage to understand the number of visitors across pages, active users, and historical data about report usage patterns.

More Resources

- Check out how our data validation framework reduced our client’s support tickets